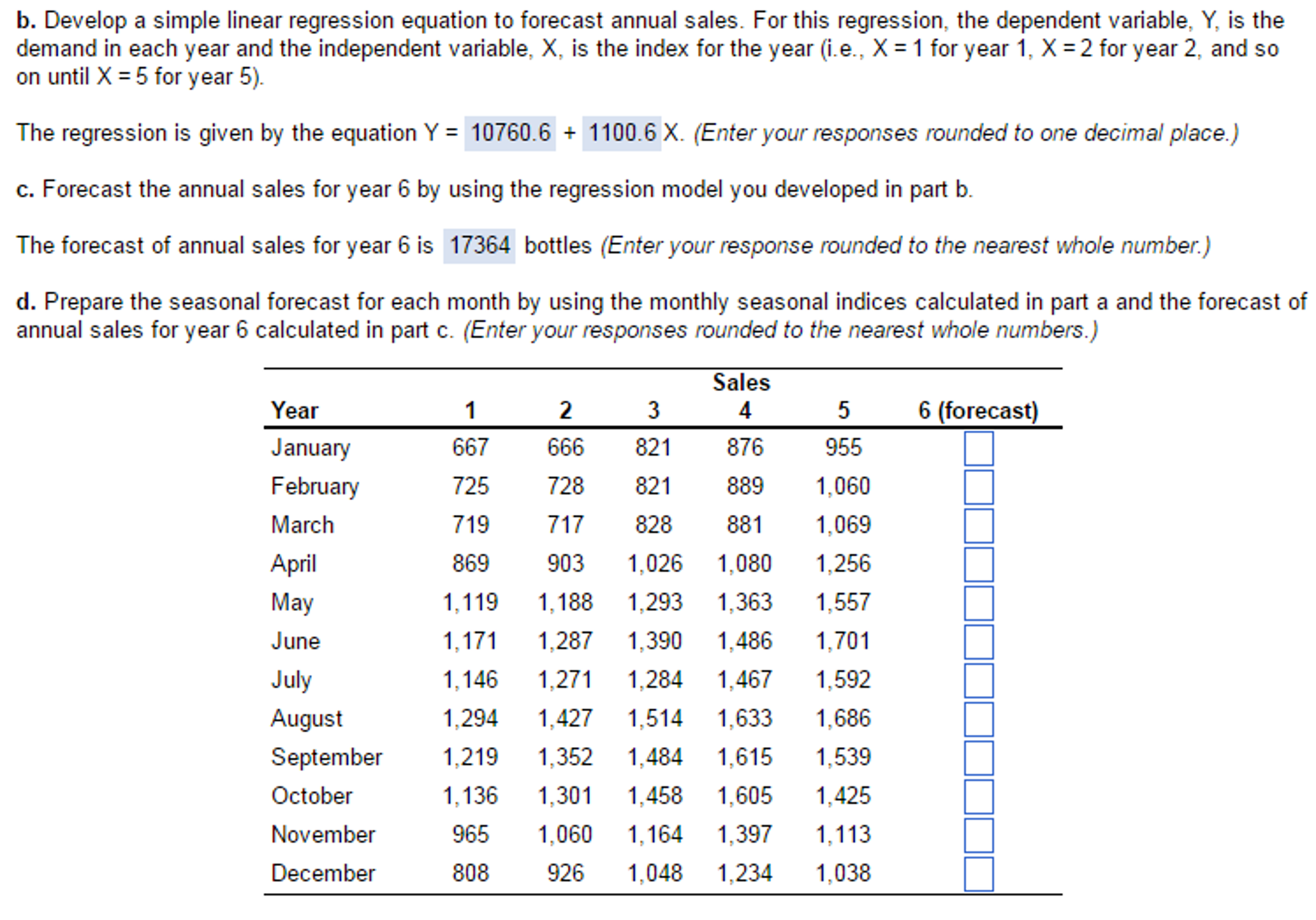

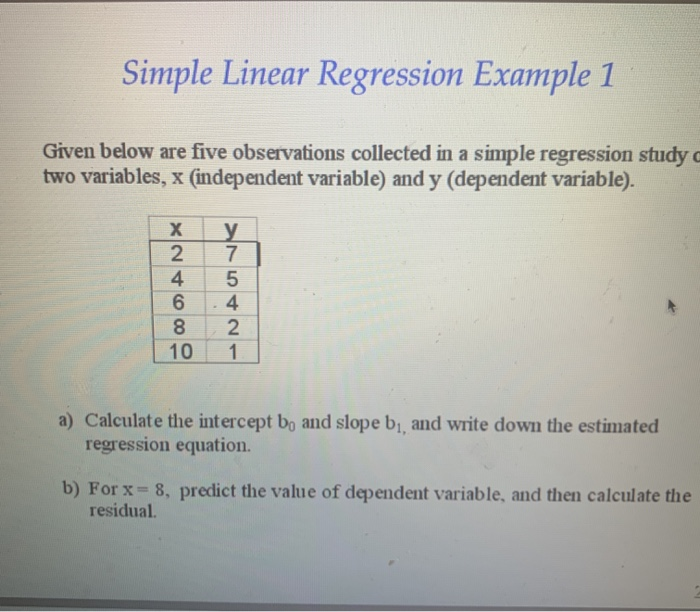

How to find the estimated simple linear regression equation

Minimizing the SSR is a desired result, since we want the error between the regression function and sample data to be as small as possible. Based on the model assumptions, we are able to derive estimates on the intercept and slope that minimize the sum of squared residuals (SSR). The model assumptions listed enable us to do so. Ordinary Least Squares (OLS)Īs mentioned earlier, we want to obtain reliable estimators of the coefficients so that we are able to investigate the relationships among the variables of interest. The Gauss-Markov assumptions guarantee the validity of Ordinary Least Squares (OLS) for estimating the regression coefficients.

Exogeneity: The disturbance term has an expected value of zero given any value of the independent variable.No perfect collinearity: None of the independent variables is constant, and there are no exact linear relationships among the independent variables.Random sample: We have a random sample of size n, where the observations are independent of each other.Linearity: The relationship between the dependent variable, independent variable, and the disturbance is linear.There are five assumptions associated with the linear regression model (these are called the Gauss-Markov assumptions): To be able to get reliable estimators for the coefficients and to be able to interpret the results from a random sample of data, we need to make model assumptions.

In simple linear regression, we essentially predict the value of the dependent variable yi using the score of the independent variable xi, for observation i. β0 is the intercept (a constant term) and β1 is the gradient. Here, β0 and β1 are the coefficients (or parameters) that need to be estimated from the data. Where the subscript i refers to a particular observation (there are n data points in total). In this way, the linear regression model takes the following form: To capture all the other factors, not included as independent variable, that affect the dependent variable, the disturbance term is added to the linear regression model. The disturbance is primarily important because we are not able to capture every possible influential factor on the dependent variable of the model. The relationship is modeled through a random disturbance term (or, error variable) ε. The linearity of the relationship between the dependent and independent variables is an assumption of the model. Linear regression is used to study the linear relationship between a dependent variable (y) and one or more independent variables ( X). As the name suggests, this type of regression is a linear approach to modeling the relationship between the variables of interest. In this article, I am going to introduce the most common form of regression analysis, which is the linear regression. By applying regression analysis, we are able to examine the relationship between a dependent variable and one or more independent variables. Regression analysis is an important statistical method for the analysis of data. Introduction to the core concepts of simple linear regression and OLS estimation Background
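The model and the least-squares idea described in this article can be sketched in a few lines of Python. This is a minimal illustration, not the article's own code: it uses the standard textbook closed-form solution for simple linear regression (slope equal to the sum of cross-deviations of x and y divided by the sum of squared deviations of x, intercept chosen so the fitted line passes through the sample means). The function names `ols_simple` and `ssr`, the "true" coefficients 2.0 and 0.5, the noise level, the sample size, and the random seed are all assumptions chosen for the example.

```python
import random


def ols_simple(x, y):
    """Return (b0_hat, b1_hat), the OLS intercept and slope for y = b0 + b1*x + e."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    # Sum of cross-deviations and sum of squared deviations of x.
    s_xy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    s_xx = sum((xi - x_bar) ** 2 for xi in x)
    # s_xx is zero only if x is constant, which the assumptions rule out.
    b1_hat = s_xy / s_xx
    # The fitted line passes through the point of sample means (x_bar, y_bar).
    b0_hat = y_bar - b1_hat * x_bar
    return b0_hat, b1_hat


def ssr(x, y, b0, b1):
    """Sum of squared residuals for the line b0 + b1*x."""
    return sum((yi - (b0 + b1 * xi)) ** 2 for xi, yi in zip(x, y))


# Simulate data from the model with assumed (hypothetical) true values
# beta0 = 2.0, beta1 = 0.5 and standard normal disturbances.
random.seed(0)
n = 200
x = [random.uniform(0, 10) for _ in range(n)]
y = [2.0 + 0.5 * xi + random.gauss(0, 1) for xi in x]

b0_hat, b1_hat = ols_simple(x, y)
print("estimated intercept:", b0_hat)
print("estimated slope:", b1_hat)
print("SSR at the OLS estimates:", ssr(x, y, b0_hat, b1_hat))
```

With a reasonably large sample, the printed estimates should land close to the assumed true values, and moving either coefficient away from its OLS estimate can only increase the SSR, which is what "least squares" means.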